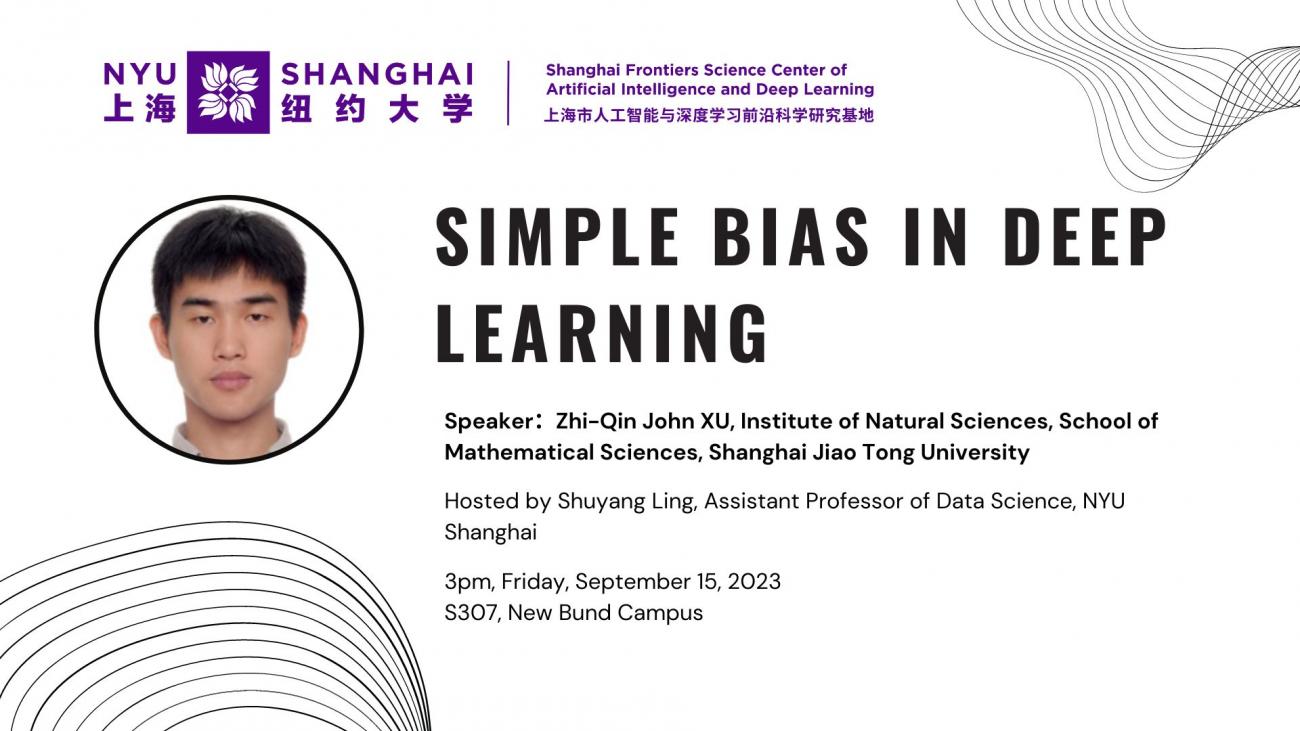

Speaker's bio: Zhi-Qin John Xu is an associate professor at Shanghai Jiao Tong University (SJTU). Zhi-Qin obtained a B.S. in Physics (2012) and a Ph.D. in Mathematics (2016) from SJTU. Before joining SJTU, Zhi-Qin worked as a postdoc at NYUAD and the Courant Institute from 2016 to 2019. He has published papers in JMLR, AAAI, NeurIPS, JCP, CiCP, SIMODS, and other venues. He is a managing editor of the Journal of Machine Learning.

Abstract: Why do neural networks (NNs) that look so complex usually generalize well? To address this question, we identify some simple implicit biases that arise when training NNs. The first is the frequency principle: NNs learn from low frequencies to high frequencies. The second is parameter condensation, a feature of the nonlinear training process that makes the effective network size much smaller.