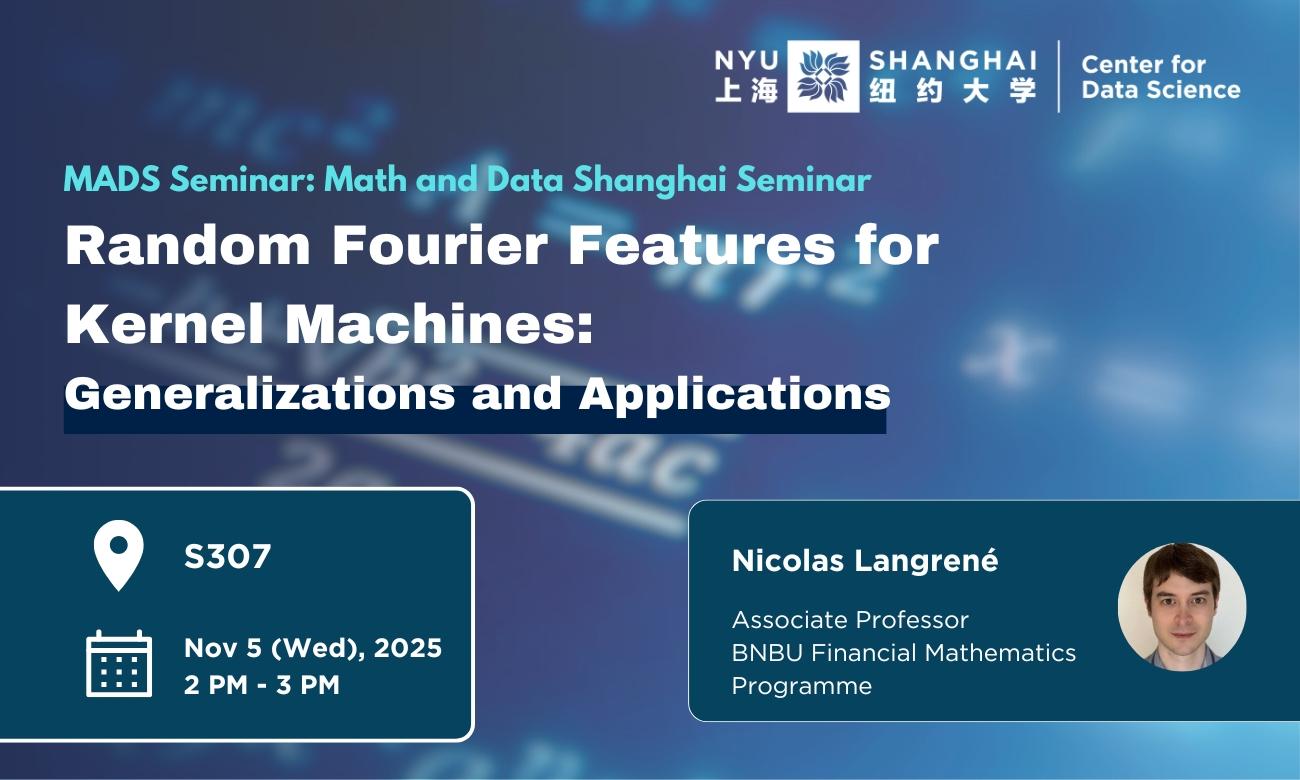

Abstract:

Kernel machines are a powerful but computationally expensive class of machine learning algorithms for pattern analysis. To speed up computations, numerical approximations of kernel functions can be used. One popular approximation, called random Fourier features (RFF), provides an explicit approximate feature mapping based on Monte Carlo sampling from the spectral density of the positive definite kernel function under consideration. The RFF technique is generally applied to the multivariate Gaussian or Laplacian kernels, due to their analytical tractability. In this talk, I will show how to generalize the RFF methodology to much larger classes of kernel functions. I will establish a simple spectral mixture representation which greatly simplifies the construction of RFFs for any positive definite kernel function with infinite support, such as generalized Matérn kernels and generalized Cauchy kernels, with applications in spatial statistics and stochastic modelling with long memory. Another spectral mixture representation makes it possible to apply the RFF methodology to positive definite kernels with compact support, such as generalized Wendland kernels and stationary fractional Brownian motion kernels. Finally, I will show how to extend the spectral sampling approach of RFF to non-positive definite kernels, with application to fast multivariate density estimation.
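
For readers unfamiliar with the baseline technique, here is a minimal sketch of standard RFF for the Gaussian kernel, the classical case the abstract starts from. It is not the generalized construction presented in the talk. The names `make_rff` and `gaussian_kernel`, the `lengthscale` parameter, and the choice of 5000 features are illustrative assumptions; the sketch uses only the Python standard library.

```python
import math
import random


def make_rff(dim, n_features, lengthscale=1.0, seed=0):
    """Return a feature map z such that z(x) . z(y) approximates
    the Gaussian kernel exp(-||x - y||^2 / (2 * lengthscale^2)).

    Hypothetical helper for illustration only.
    """
    rng = random.Random(seed)
    # The spectral density of the Gaussian kernel is itself Gaussian,
    # with standard deviation 1 / lengthscale in each coordinate.
    freqs = [
        [rng.gauss(0.0, 1.0 / lengthscale) for _ in range(dim)]
        for _ in range(n_features)
    ]
    # Random phases, uniform on [0, 2*pi).
    phases = [rng.uniform(0.0, 2.0 * math.pi) for _ in range(n_features)]
    scale = math.sqrt(2.0 / n_features)

    def feature_map(x):
        return [
            scale * math.cos(sum(w_i * x_i for w_i, x_i in zip(w, x)) + b)
            for w, b in zip(freqs, phases)
        ]

    return feature_map


def gaussian_kernel(x, y, lengthscale=1.0):
    """Exact Gaussian kernel, used only to check the approximation."""
    sq_dist = sum((a - b) ** 2 for a, b in zip(x, y))
    return math.exp(-sq_dist / (2.0 * lengthscale ** 2))


z = make_rff(dim=2, n_features=5000)
x, y = [0.3, -0.5], [1.0, 0.2]

# Monte Carlo estimate of the kernel as an inner product of features.
approx = sum(a * b for a, b in zip(z(x), z(y)))
exact = gaussian_kernel(x, y)
print(f"approx={approx:.3f} exact={exact:.3f}")
```

The inner product of the two feature vectors is a Monte Carlo average, so its error shrinks roughly like one over the square root of the number of features. For other kernels, the only step that changes in this sketch is how the frequencies are drawn, which is where a sampler for the kernel's spectral density is needed.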